Prasun Baidya is a technology and product leader with extensive experience scaling high-performing engineering teams. He was most recently Head of Technology and Product at TrueCar, where he led the company’s technology strategy, product development, and platform innovation. Previously, he held senior engineering and product leadership roles at Patterson Companies, Inc. and Optum, driving large-scale digital transformation, modernizing enterprise platforms, and advancing data-driven product capabilities across complex healthcare and commerce ecosystems.

In our conversation, Prasun talks about how he leverages AI to add genuine value — focusing less on hype and more on clear ROI, adoption thresholds, and time to value. He shares how product leaders can evaluate where AI belongs in a company’s roadmap, and walks through real examples shaped by usage data and customer behavior. Prasun also outlines how to prioritize AI investments and use those resources effectively.

Evaluating AI opportunities through ROI

What’s your approach for evaluating an opportunity — specifically one involving AI? Which metrics do you want to see before you actually approve it?

For me, the AI revolution is real. The trend is evolving — it’s not linear, it’s exponential. Day to day, newer models are coming in, and there are real opportunities to leverage AI with significant advantages.

That said, when there’s hype around a technology like this, you hear people say, “Let’s put AI in everything we’re trying to build.” That’s a faulty concept. There are still limitations, so my advice is to not go in blindly. For me, it’s more about time to value.

When you start thinking about integrating AI into products, you have to remember that it takes time to build. There are significant time and monetary investments involved. The question is: what real value is this going to bring? Is it workforce efficiency? Will the user actually save time? You have to compare the work that goes into it with the outcome — the ROI gain for the user.

The second step is building a minimum viable product (MVP). Today, with prototyping tools like v0 by Vercel, you can build a prototype in a matter of hours and then validate it with customers. Is this something they want? Is it something they’ll actually use?

Lastly, instrumentation from day one is critical. When you deploy an MVP, you need to ask: is it providing value? How are customers using these AI capabilities? Are they seeing the benefits you predicted now that it has shipped to production?

When you perform this initial evaluation, is there a certain threshold that you look for to confirm if it’s worth the effort?

Yes. Every time you build something, you’re testing a hypothesis. In my opinion, you need a clear threshold upfront. For example, if we say the adoption threshold is 30 percent, and we ship an MVP, but adoption stays below that, then it’s probably not worth continuing. When you look at the ROI — the effort to build and maintain it versus how much it’s being used — it just doesn’t make sense to keep investing.

But if the hypothesis is that we’ll reach 30 percent adoption, and we actually hit that, and we can clearly see paths to grow further to 50 percent, then there’s real excitement. That’s when we should invest further — add features, iterate, and push adoption higher. The goal is always to move from 30 percent to 60 percent, then to 90 percent, or more.

Everyone needs a threshold because building anything costs money, time, and effort. If the data says adoption is low or customers don’t want the feature, you should stop. You might talk to 40 customers during early research, and maybe 30 of them say, “Yes, I love this. This will help our automation or accuracy.” But if you ship the MVP to a wider audience and adoption is still under 30 percent, then you should shelve it. The market is telling you something, and you have to listen.
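The threshold rule described above can be sketched as a small decision function. This is only an illustration: the names `MvpResult`, `adoption_rate`, and `decide` are hypothetical, the 30 percent default comes from the example in the conversation, and the "review" outcome (threshold met, but no clear growth path) is an assumption that the interview does not cover.

```python
from dataclasses import dataclass


@dataclass
class MvpResult:
    """Usage numbers collected from MVP instrumentation (hypothetical shape)."""
    eligible_users: int   # users who had access to the feature
    adopting_users: int   # users who actually used it


def adoption_rate(result: MvpResult) -> float:
    """Share of eligible users who adopted the feature."""
    if result.eligible_users == 0:
        return 0.0
    return result.adopting_users / result.eligible_users


def decide(result: MvpResult, threshold: float = 0.30,
           has_growth_path: bool = False) -> str:
    """Apply the threshold that was agreed on before the MVP shipped."""
    if adoption_rate(result) < threshold:
        return "stop"      # below threshold: not worth continuing
    if has_growth_path:
        return "invest"    # threshold met and a path to grow adoption exists
    return "review"        # assumption: threshold met, growth path unclear


print(decide(MvpResult(eligible_users=1000, adopting_users=250)))
print(decide(MvpResult(eligible_users=1000, adopting_users=320),
             has_growth_path=True))
```

The point of fixing `threshold` before shipping is that the decision follows from the data rather than from enthusiasm gathered in early research.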

How do you decide whether an AI capability belongs in a customer-facing product vs. internal tooling?

I think about this in two parts. AI can be internal-facing, customer-facing, or sometimes both. At the end of the day, the question is: what efficiency gain are you trying to achieve in each case? When you’re building internal tools, the lens is whether this helps your teams work more efficiently. For example, there are AI-native tools you can use for engineering teams — automatic code reviews, test case generation, and even GitHub Actions that help with automation. These are purely internal, but the output is measurable.

You can self-report some of it, but ideally, you measure it with data: how much time engineers are saving writing unit tests, documentation, or doing manual code reviews because these tools are mature enough to handle that work. That’s one aspect of it.

Then, when you’re building customer-facing products, it’s about understanding what problem you’re trying to solve and why. You still start with a quick MVP, based on market research, then put it in front of users and measure adoption. You ask, “Is this helping our customers? Is it expanding our customer base? Is it driving revenue, retention, or efficiency?” Those metrics are critical for me on both sides — internal and external — to decide whether an AI capability is actually successful.

Letting data challenge assumptions

Can you share an example of a time when you reversed an AI decision after seeing real usage data?

Early on, when large language models first emerged, we had an idea for a SaaS product we were building. The question was: how can we use LLMs to give better insights to consumers based on the data they’re seeing? One of the offerings was providing highly qualified, highly filtered leads. The idea was to give consumers a smaller number of these vetted leads so they didn’t have to sift through a large volume of low-quality ones. We believed this would result in higher conversion rates.

We built machine learning models and added RAG capabilities so that when a lead came in, the model evaluated things like user behavior, demographics, and time spent on the site. Based on that data, we classified leads as highly convertible or low quality and sent only the best ones to customers.

We implemented this quickly, but we had to pause it very soon after. The feedback was essentially, “You’re sending me fewer leads.” They cared more about volume than quality. They wanted to make more calls, even if conversion rates were low. What they weren’t factoring in was the time cost — calling 20 leads to convert one versus calling five leads and converting four. But that value wasn’t communicated well. We assumed people would immediately understand it, and that was a mistake.
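The time cost mentioned above becomes clearer with the arithmetic written out. The sketch below uses only the two figures from the example (20 calls for one conversion versus five calls for four); the function names are hypothetical.

```python
def conversion_rate(calls: int, conversions: int) -> float:
    """Fraction of calls that end in a conversion."""
    return conversions / calls


def calls_per_conversion(calls: int, conversions: int) -> float:
    """Average number of calls needed to win one customer."""
    return calls / conversions


# (calls made, conversions won), taken from the example in the text
unfiltered = (20, 1)
filtered = (5, 4)

for label, (calls, won) in {"unfiltered": unfiltered, "filtered": filtered}.items():
    print(
        f"{label}: {conversion_rate(calls, won):.0%} conversion, "
        f"{calls_per_conversion(calls, won):.2f} calls per conversion"
    )
# unfiltered: 5% conversion, 20.00 calls per conversion
# filtered: 80% conversion, 1.25 calls per conversion
```

Framed as calls per conversion, fewer leads means less work for each customer won, which is the value that was not communicated well at first.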

So we paused, talked about it more, and rolled it out to a small group of more progressive customers first. They used it and saw the results. In some cases, they saw conversion rates of 80 percent, and then became advocates. They shared their success stories, and that helped us slowly reintroduce it to our broader customer base.

The lesson was that with new technology, you need pilots, proof points, and education. You can’t just roll something out because you think it’s great. Without that groundwork, you’ll get pushback.

What’s the smallest AI-powered change you’ve ever shipped that you felt really delivered an outsized impact, something maybe you weren’t even expecting?

I can share an internal example with coding agents. When these tools first came out, I thought, “This will immediately boost productivity.” We rolled it out to engineers and basically said, “Here’s a force multiplier. Productivity should skyrocket.” Well, that was a fallacy. My assumption was that our engineers had heard about the hype, played around with AI tools, and were already so comfortable with them that they’d start using the tool we rolled out.

That assumption was wrong. Honestly, I was dumbfounded when people started rejecting it — it’s a tool in your arsenal that you can use, so where was the hesitation? Adoption was extremely low — 1 or 2 percent. People didn’t trust the tool. Some directly said, “This is a bot. I don’t trust the quoted price.” Some tried it and felt they spent more time debugging AI-written code than writing it themselves.

So we stopped and listened. We asked why people weren’t using it. The feedback was consistent: lack of trust, fear of job replacement, and poor early experiences caused by weak prompts and lack of context.

To fix this, we focused on education. We formed a small pilot team, a “tiger team,” that believed in the tool. They documented best practices on how to write prompts, how to provide context, what a good CLAUDE.md file should look like, and how to avoid garbage-in, garbage-out scenarios. After a couple of months, they had real results showing they were spending less time writing boilerplate code and more time designing systems and thinking about scalability, reliability, and security. They shared concrete examples and metrics.

Next, we did a roadshow across teams. The pilot team demonstrated how they used the tool, shared prompt templates, and explained what worked and what didn’t. That changed everything. Adoption went up to almost 50 percent. We also saw a big increase in AI-generated code successfully making it into production.

That was the biggest “aha” moment for me. Engineers weren’t coding less — they were just doing higher-quality work.

When AI stops being a differentiator

What specific signals tell you when an AI capability has shifted from being a competitive advantage to more of a commodity?

There are three signals I look for. First is when open source catches up to about 80 percent of your capability. Once tools exist that can deliver most of what you built internally, your differentiation and competitive advantage evaporate really quickly. For example, an AI-powered search feature might have been a genuine differentiator in 2022. It could understand context, handle synonyms intelligently, and rank the results well. Now, anyone can build something 80 percent as good with modern AI tools. We might have spent years trying to build our algorithm, and now it’s plug-and-play.

Second is when customers stop mentioning it in sales conversations. Early on, customers ask about the AI feature and lean in. Later, they just assume it exists. When they stop asking because it’s expected, it’s become a commodity — like having a mobile app today.

Third is when marginal investment produces diminishing returns. Early improvements are noticeable: accuracy jumps from 70 percent to 85 percent, workflows change, and users feel it. Later, you spend months going from 85 percent to 87 percent, and no one notices. That’s a clear signal that the capability has matured beyond the point where additional investment creates a competitive advantage.

At that point, we make a specific playbook shift and change the roadmap. We invest less in the core capability and more in the moat around it — integration into workflows, personalization, data flywheels, and vertical use cases. The value shifts from the AI itself to how it’s embedded.

You’ve mentioned that you’re an advocate of KISS prioritization. If you had to remove 70 percent of AI features from a roadmap tomorrow, how would you decide what stays?

I’m a big metrics and data guy, but I’d say the first thing is to be data-informed rather than purely data-driven. If adoption is flat, below the threshold we set, and there’s no clear path to grow it, we kill it. If a feature is at 40 percent adoption and trending upward, that’s something we double down on. Data can blind you when you look purely at numbers without context, so that perspective is important.

There’s also a qualitative side where we talk to users. We listen to sentiment. If adoption looks good but feedback is overwhelmingly negative, that’s a signal that something is wrong. I like to use the example of Word or Excel. Those products have hundreds of features, but most people use maybe five. Maintaining low-adoption features is expensive and often not worth it. The same applies to AI. Every feature should have a clear outcome, clear adoption, and clear value.

When you prioritize quantitative data, the numbers track very easily. But when you mix in qualitative data, how do you balance those signals?

Numbers alone don’t tell the full story. Adoption rates don’t explain why people love or hate a feature. That’s why I’m a strong believer in the voice of the customer. You segment users, talk to them directly, and understand sentiment. If adoption is high but sentiment is negative, you need to investigate. If adoption is moderate but sentiment is extremely positive, that might justify further investment.

Data can blind you if you don’t add context. The combination of quantitative metrics and qualitative insight is what leads to better decisions. As a product leader or engineering leader, that’s very important to me.
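One way to picture how the quantitative and qualitative signals combine is a small rule table. This is a sketch under stated assumptions, not a method described in the interview: the `Sentiment` categories, the function name `next_step`, the order in which the rules are checked, and the reading of "moderate adoption" as "below the threshold" are all illustrative choices.

```python
from enum import Enum


class Sentiment(Enum):
    NEGATIVE = "negative"
    MIXED = "mixed"
    VERY_POSITIVE = "very_positive"


def next_step(adoption: float, threshold: float,
              trending_up: bool, sentiment: Sentiment) -> str:
    """Combine adoption data with customer sentiment (illustrative rules)."""
    meets_threshold = adoption >= threshold
    if meets_threshold and sentiment is Sentiment.NEGATIVE:
        return "investigate"          # good numbers, unhappy users
    if meets_threshold and trending_up:
        return "double down"          # e.g. 40 percent and rising
    if not meets_threshold and sentiment is Sentiment.VERY_POSITIVE:
        return "consider investing"   # assumption: strong sentiment may justify more
    if not meets_threshold and not trending_up:
        return "kill"                 # flat, below threshold, no path to grow
    return "monitor"                  # assumption: everything else stays under watch


print(next_step(0.40, 0.30, True, Sentiment.MIXED))
print(next_step(0.60, 0.30, False, Sentiment.NEGATIVE))
```

Checking sentiment before the adoption trend reflects the idea that numbers without context can mislead, though a team could reasonably order these rules differently.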

The core of an AI strategy

What’s the simplest mental model you use to explain AI strategy to a group of executives whose buy-in you need?

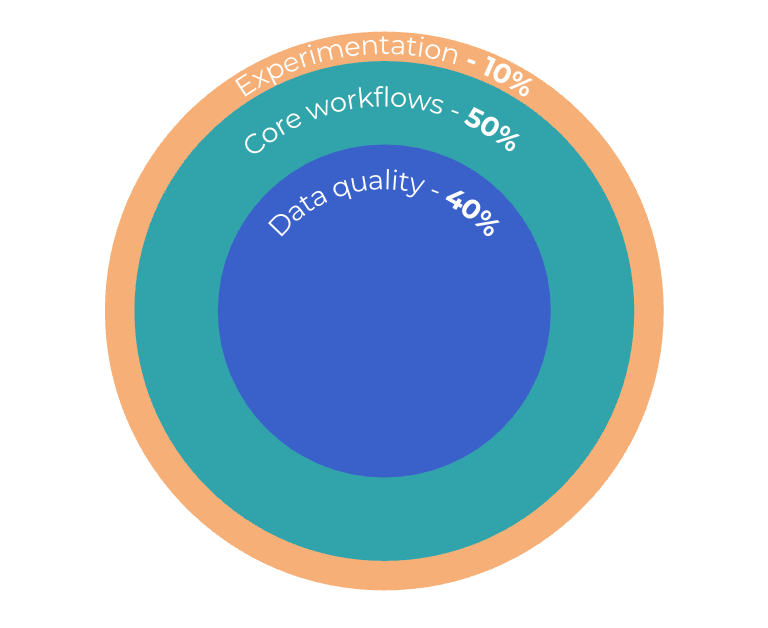

I use a three-layer framework. I literally draw it out on a whiteboard, and I’ve done this at multiple companies. I draw three circles. The inner circle is what I call “fix the plumbing.” If your data quality is poor or your infrastructure isn’t ready, AI won’t help. Garbage in, garbage out. About 40 percent of your investment should go here.

The middle circle represents the core workflows. This is where about 50 percent of your investment and energy goes, because this is where you get ROI — better efficiency, better customer experiences, better monetization.

The outer circle is experimentation. That’s 10 percent. Most experimentation will fail, but just one success can be transformational. Even if only 1 percent of users convert, that could be the change the company needs.

Executives or boards of directors often want to “sprinkle AI everywhere,” but strategy starts at the core. Without a solid foundation, nothing else works.
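The three-layer split can be written down as a simple allocation. The percentages come from the framework above; the budget figure and the names `LAYER_SHARES` and `allocate` are hypothetical and used only for illustration.

```python
# Percentage of AI investment per layer, as described in the framework
LAYER_SHARES = {
    "fix the plumbing": 40,   # data quality and infrastructure
    "core workflows": 50,     # where most of the ROI is expected
    "experimentation": 10,    # most bets fail, one success can be transformational
}


def allocate(total_budget: float) -> dict[str, float]:
    """Split a budget across the three layers by their percentage share."""
    assert sum(LAYER_SHARES.values()) == 100, "shares must cover the whole budget"
    return {layer: total_budget * pct / 100 for layer, pct in LAYER_SHARES.items()}


# Hypothetical annual AI budget, for illustration only
for layer, amount in allocate(1_000_000).items():
    print(f"{layer}: {amount:,.0f}")
# fix the plumbing: 400,000
# core workflows: 500,000
# experimentation: 100,000
```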
